Description

Floating-point computing has evolved from pure software emulation to external co-processors and, ultimately, to fully integrated execution units within modern CPUs and GPUs. The rise of GPUs marked a major inflexion point, providing massive parallelism essential for AI workloads and high-performance computing (HPC). Today's heterogeneous architectures leverage a mix of CPUs, GPUs, and domain-specific accelerators to extract maximum performance. Increasingly cohesive integration across these compute elements—characteristic of recent system designs—improves programmability, data movement, and overall throughput, which is especially critical in AI and HPC deployments. Meanwhile, escalating power constraints continue to push the design of more energy-efficient FPUs and low-power compute fabrics. A central challenge is navigating the trade-off between numerical precision and performance, as not all applications can tolerate aggressive precision reduction. Additionally, architects must balance the benefits of highly specialized hardware tuned for particular workloads against the flexibility and broader applicability of general-purpose CPU resources.

AI and Reduced Precision

AI has increased demand for reduced-precision floating-point types (e.g., FP16, BF16, FP8), as many AI models can operate with lower numerical precision to improve speed and energy efficiency. Specialized hardware, such as NVIDIA's Tensor Cores, supports these operations. While AI workloads exploit reduced precision and parallelism, scientific computing often still requires the accuracy of double precision (FP64). Mixed-precision approaches are bridging the gap, enabling faster simulations without sacrificing the required accuracy.
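The precision trade-off can be made concrete with a small sketch. The Python below is illustrative only and is not part of floatrix. The helper names (`to_fp16`, `to_bf16`, `sum_fp16`, `sum_mixed`) are hypothetical, and the bfloat16 conversion uses simple truncation rather than the round-to-nearest that hardware typically implements. It compares accumulating entirely in FP16 against a mixed-precision scheme that keeps FP16 inputs but accumulates in FP64.

```python
import struct


def to_fp16(x: float) -> float:
    """Round an FP64 value to the nearest IEEE 754 binary16 (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_bf16(x: float) -> float:
    """Convert to bfloat16 by keeping the top 16 bits of the FP32 encoding.

    Assumption: plain truncation (round toward zero) for simplicity.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]


def sum_fp16(values):
    """Accumulate in FP16: every partial sum is rounded back to binary16."""
    acc = 0.0
    for v in values:
        acc = to_fp16(acc + to_fp16(v))
    return acc


def sum_mixed(values):
    """Mixed precision: FP16 inputs, FP64 accumulator."""
    acc = 0.0
    for v in values:
        acc += to_fp16(v)
    return acc


if __name__ == "__main__":
    data = [0.1] * 10000
    print("FP16 accumulator :", sum_fp16(data))
    print("FP64 accumulator :", sum_mixed(data))
    print("bfloat16(3.14159):", to_bf16(3.14159))
```

With these inputs the pure-FP16 sum stalls once the accumulator is large enough that each addend is smaller than half the spacing between adjacent FP16 values, while the FP64 accumulator keeps tracking the sum of the rounded inputs. This is the kind of behaviour that mixed-precision schemes are designed to avoid.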

floatrix

Floatrix is a vendor-neutral tool that enables exhaustive end-to-end verification of floating-point hardware. Our checkers are written in SystemVerilog Assertions (SVA) and have been optimised for performance to enable end-to-end sign-off using compositional formal methods techniques, guaranteeing much higher proof convergence on micro-architectural implementations than would be possible with alternative techniques.

With automation, intelligent debug, and six-dimensional coverage and reporting via SURF, we have brought what was missing for end-to-end sign-off of floating-point designs implemented in FP4, FP8, and bfloat16, as well as designs that must comply with the IEEE 754 standard (16-, 32-, and 64-bit precisions).

Key Highlights

- GUI-Based

- Report Dashboard

- IEEE 754 debug support for most operations; smart regression flow; intelligent debugger

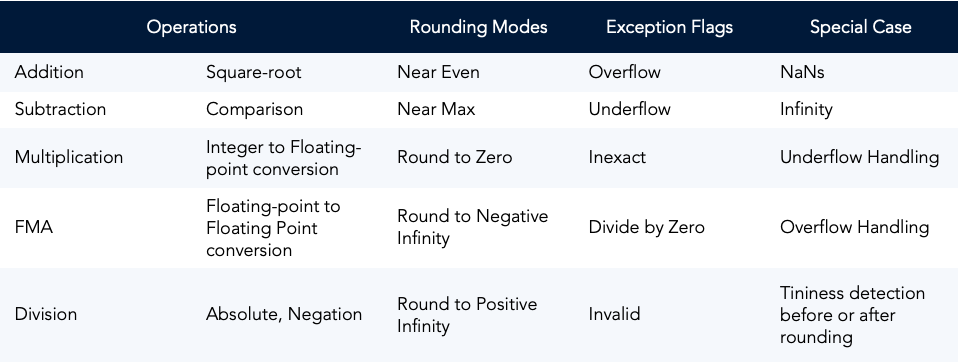

- Various rounding modes available

- Out-of-the-box support for half, single, and double precisions; easily configurable and customisable

- Vendor Neutral

Supported Operations

Implemented in floatrix

Who should view this?

Designers

Architects

Managers

Verification Engineers

What will you learn?

How to use floatrix

Which designs floatrix can verify

Example Demos